danbricklin.com/log


MIT EECS Department 100th Anniversary

This is a report on the part I attended of the MIT Electrical Engineering and Computer Science Department's 100th Anniversary celebration, May 23, 2003. I was only able to attend the first morning of the two-day affair (most of the second day was tours).

After a few comments from MIT President Charles Vest, the head of the department, Prof. John Guttag, introduced prior head Prof. Paul Penfield, who headed the department from 1989 to 1999.

Prof. Penfield started by holding up the cane kept by the department head. This cane, symbolizing the decision to keep the computer science department together with the electrical engineering department, was made by the late Prof. Dertouzos. It has a piece of amber (the Greek word for amber, "elektron", is the root of "electron", and rubbing a piece is a way to produce static electricity) and a half of a quarter (25 cents is "two bits" in slang):

Department Head Prof. John Guttag, Prof. Paul Penfield holding the cane given to the department head along with a close-up photo he showed

Prof. Penfield then gave a speech which chronicled the history of the department and its philosophy. Very interesting. He showed the progression in thinking about electrical engineering teaching, ending with this:

Today's Assumptions and Vision:

Assumptions:

In the days of Prof. Jackson (department head starting in 1907):

From Prof. Brown (department head starting in 1959):

Today's vision:

So, the department's challenge is to "educate students who will help the world understand and wisely embrace rapidly changing technology."

Prof. John Guttag then continued talking about the department. It has approximately 2000 students and 115 faculty. Approximately $20M/year is spent on academics, including over 100 TAs per term, and $60M/year on research, mostly in interdepartmental labs. The department's mission includes education first and foremost. There is an emphasis on breadth in EE and CS. The purpose of that breadth is to embolden the students to keep learning throughout their careers, and not just continue to practice a craft as it was taught to them. Knowing how my MIT friends and I love to keep learning, and have a thirst for understanding new things, it's nice to know that was a design of our schooling.

Publication is not always the goal, he says. For example, in WWII, the Radiation Lab did research in the national interest with over 4,000 people, designing over 100 radar systems. He showed a quote from Admiral Karl Doenitz from December 1943:

"The enemy has rendered the U boat war ineffective. He has achieved his object not through superior tactics or strategy, but through superiority in the field of science; this finds its expression in the modern battle weapon: detection. By this means he has torn our sole offensive weapon in the war against the Anglo-Saxons from our hands."

Then there was a break before the next session on "Engineering Human-Machine Relationships".

Bill Poduska and Prof. Fernando Corbato at the break, Prof. Barbara Liskov, associate Department Head for Computer Science introducing speakers

Kresge Auditorium filled with people

Professors Barbara Liskov, Martin Schmidt, Leslie Kaelbling, Victor Zue, and Rodney Brooks

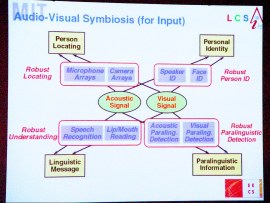

Victor Zue spoke about how, by using multiple inputs (specifically acoustic and visual), programs are better able to figure things out, such as locating the speaker on a tape or identifying a person. Here is a slide from his talk:

He said: "Anthropomorphic interfacing coming".

The next talk was "Learning to Live with Uncertainty" by Leslie Pack Kaelbling at the AI Lab.

"Uncertainty makes hard problems no longer a 'mere' problem of implementation." Examples: a planetary science robot trying to decide where to drill, or a shopping program knowing what you like and what times are best for delivery.

"How to handle uncertainty? Reduce it: perceive the state of the world, learn its regularities. Embrace it: know what you don't know, and act accordingly."

She showed some examples of robot-oriented and assistant-oriented learning (walking down hallways, scheduling, etc.).

Learning will be an important component of future applications, she says.

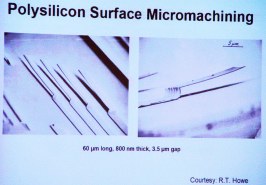

Prof. Martin A. Schmidt of the Microsystems Technology Laboratory talked about the role of tiny technologies.

"A system is more than just electronics!" Input, output, communications. Showed examples of tiny devices, including DLP, accelerometers, microphones, pressure sensors, drug delivery systems, etc.

Electric power is important, so they are making smaller, lower-power devices. They are also finding ways to "scavenge" energy from mechanical vibrations. Examples: heel-strike harvesting in shoes, and a micro-gas turbine (a portable power source with 10 times the power density of state-of-the-art batteries).

He pointed out that the engineering challenges of the 20th century were of increasing scale (bridges, dams, airplanes, rockets). The engineering challenges of the 21st century will be of decreasing scale. Their clean room lab for creating tiny devices has become the EECS department's "New Machine Shop". Usage went from 40 users in 1987 to over 350 last year.

The opportunity is "unlimited, inexpensive, miniature devices for sensing, actuation, and communications."

Prof. Rodney Brooks talked about other AI developments, with video demonstrations of Kismet and other robots, and work from the KneeLab. The videos are worth watching: See Kismet, and the Intelligent Prosthetic Knee (page, video).

My reactions and questions:

I found the philosophical material about the EECS department quite interesting and meaningful. The Human-Machine Relationships stuff, though, bothered me. I've written about this before, in reaction to news reports about some research, in my "Metaphors, Not Conversations" and "The 'Computer as Assistant' Fallacy" essays.

What bothered me wasn't the research. I think it is good and important research. It was the thinking about creating walking/talking robots rather than individual technologies that can be used in many other ways (except in the Microsystems area, which seems to very much have a "basic ingredient" type of thinking). In reality, as seen with the Intelligent Prosthetic Knee, the research ends up being applied in ways that are more like tools. I saw, in my listening to what was being done, how the learning research can be used to help sensors that are part of other systems act more as you would expect. (This is like an edge detection algorithm in an image manipulation program "doing the right thing".) I saw the "natural" conversation body language of Kismet teaching us techniques for making tools that have better feedback to their users, with video cameras showing what they are looking at and reacting to what they see.

When Prof. Zue talked about his conversation systems, he kept talking about "mobile" as the reason for that type of interaction. I think he's slightly off base here. It isn't mobile that he's dealing with. It's short, not too deep interactions with small interfaces. Long, deep interactions usually take up all of your attention, and lend themselves to sitting down and concentrating on a display of some sort. They are hard to do when moving and negotiating stairs or traffic. However, I can use "mobile" devices in a plane, train, or coffee shop, and they are fine for more traditional interactions. Quick, acoustic interactions also are useful in a "fixed", at your desk, situation. Again, I see a fixation on the "talking robot" that ignores building tools and thinking that way.

I asked a question during the Q&A: "I was surprised that I didn't hear a common word from my days at MIT: Tools. We hear about building 'assistants', but not the tools that supposedly distinguish us as being human. Any comments?" [Note: In my day, "tool" meant to study real hard at MIT, and a person who was a "tool" was one who studied an awful lot. I used that as a reason to choose that particular term, but I meant it in the "screw driver and hammer" sense.]

The reaction from the panel? Basically silence. Really. They had little to say about tools. There was some short thing about "agents doing something on their own...", but no canned thinking (or off the cuff thinking) about how their research would create tools for society. No mention of ever thinking the word "tool". As someone who describes himself as a tool builder, and a graduate the department likes to brag about because of his contribution to society in that regard, I find this very sad, so I write this here to help open a dialog.

In the hall:

When I walked out into the hall after the presentation, on the way to lunch, I saw someone demonstrating a Segway. (I've written lots about the device.) Who should I see trying it, but none other than Jay Forrester. He headed the Whirlwind project at MIT (50-some years ago, before I was born), the granddaddy of realtime digital computing. He did work on feedback systems: electronic, mechanical, and economic (with system dynamics). Here he was, for the first time, on a great example of realtime computing and feedback, the Segway. I had to take a picture and share it:

Jay Forrester on a Segway HT

Since I now had a chance to get on a Segway for the first time in about a year, there was something I wanted to try. I took out my 10+ pound computer bag and swung it out while standing still on the Segway. My feet stayed steady, just as if I was standing on the ground:

Holding a heavy bag while standing on a Segway -- not like a bicycle or a scooter at all

That's it!

-Dan Bricklin, 2 June 2003

© Copyright 1999-2018 by Daniel Bricklin

All Rights Reserved.

See disclaimer on home page.
